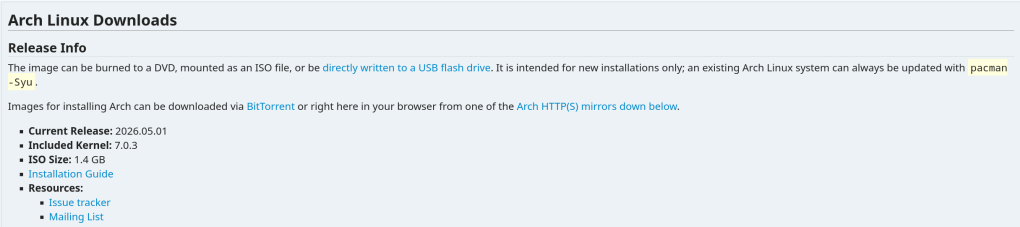

Comes with the 7.0.3 Linux Kernel.

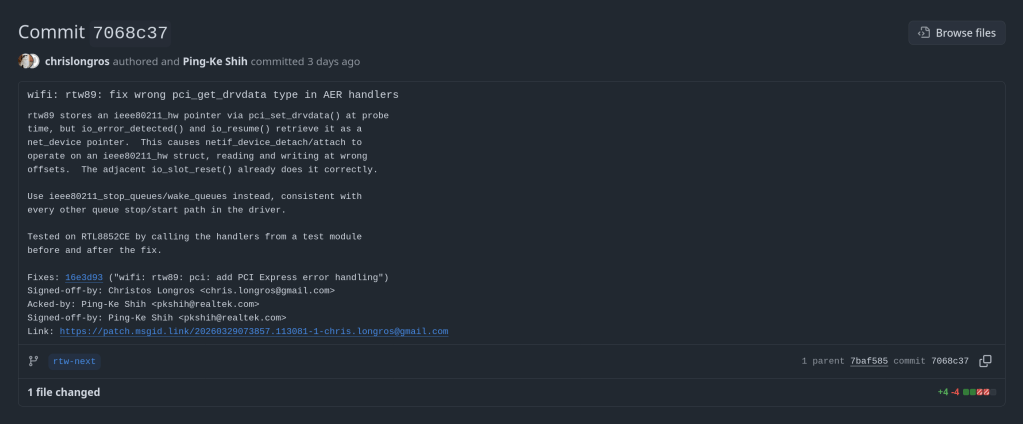

My commit was merged into the rtw89 Realtek Linux kernel driver:

https://github.com/pkshih/rtw/commit/7068c379cf9aa8afe4dce4d9d82390187aa9c4d0

If you’re trying to run VirtualBox on a Linux host with an AMD CPU and you get this error:

VirtualBox can't enable the AMD-V extension. Please disable the KVM kernel extension, recompile your kernel and reboot (VERR_SVM_IN_USE).

The problem is that KVM has claimed the AMD-V virtualization extension, and VirtualBox can’t use it at the same time.

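This issue has become more common with Linux kernel 6.12 and later. Since that release, KVM enables hardware virtualization as soon as its module is loaded (the kvm.enable_virt_at_load parameter, on by default) instead of waiting for the first VM to start, so it claims AMD-V even if you never use KVM. If you recently updated your kernel (e.g., to 6.14+ on Arch Linux) and VirtualBox stopped working, this is likely the cause.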

You don’t need to recompile anything. Just unload the KVM modules:

sudo modprobe -r kvm_amd

sudo modprobe -r kvm

Then start your VirtualBox VM; it should work.

If you want KVM to never load automatically (so VirtualBox always works), blacklist the modules:

echo "blacklist kvm_amd" | sudo tee /etc/modprobe.d/blacklist-kvm.conf

echo "blacklist kvm" | sudo tee -a /etc/modprobe.d/blacklist-kvm.conf

If you need KVM again (e.g., for QEMU), simply reload the modules:

sudo modprobe kvm

sudo modprobe kvm_amd

You can only use one hypervisor at a time: VirtualBox or KVM, not both simultaneously. That’s a hardware limitation of AMD-V/SVM.
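Before unloading anything, it can help to confirm which KVM modules are actually loaded. The following is a minimal sketch, assuming the usual /proc/modules layout where the module name is the first field on each line; the helper names are hypothetical and exist only for illustration.

```python
from pathlib import Path

# Vendor modules first, core "kvm" last, so unloading happens in a safe order.
KVM_MODULES = ("kvm_amd", "kvm_intel", "kvm")


def loaded_kvm_modules(proc_modules_text: str) -> list[str]:
    """Return the KVM-related module names found in /proc/modules content."""
    loaded = {
        line.split()[0]
        for line in proc_modules_text.splitlines()
        if line.strip()
    }
    return [name for name in KVM_MODULES if name in loaded]


def unload_commands(modules: list[str]) -> list[str]:
    """Build the modprobe -r commands shown above for the given modules."""
    return [f"sudo modprobe -r {name}" for name in modules]


if __name__ == "__main__":
    path = Path("/proc/modules")
    text = path.read_text() if path.exists() else ""
    for command in unload_commands(loaded_kvm_modules(text)):
        print(command)
```

The script only prints the commands; running them is left to you, since unloading fails anyway while a KVM guest is still running.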

An annoying problem that I always had while running FreeBSD on my Dell Inspiron 15 5510 is the high temperature and fan speed. Doesn’t matter if it’s a live medium or post-installation — the average CPU temp is over 60 degrees Celsius.

With FreeBSD 15.0 (released December 2025) and the FreeBSD Foundation’s laptop project, things have improved significantly. Here’s what works.

First, load the Intel Core temperature sensor driver:

kldload -v coretemp

Then check the temperature with:

sysctl dev.cpu | grep temperature

To load this automatically at boot, add to /boot/loader.conf:

coretemp_load="YES"

FreeBSD ships with powerd, a daemon that dynamically adjusts CPU frequency based on load. Enable it in /etc/rc.conf:

powerd_enable="YES"

powerd_flags="-a hiadaptive -b adaptive"

This sets the CPU to hiadaptive mode on AC power (favors performance but still scales) and adaptive on battery (favors power saving).

Enable deeper CPU sleep states by adding to /etc/sysctl.conf:

hw.acpi.cpu.cx_lowest=Cmax

FreeBSD 15 brings new power management features from the Foundation’s laptop initiative.

For laptops, suspend/resume support can be tested with:

acpiconf -s 3

If it works, you can bind it to your laptop lid by adding to /etc/sysctl.conf:

hw.acpi.lid_switch_state=S3

After applying these changes, my Dell Inspiron dropped from a constant 60+ degrees down to around 40-45 degrees at idle, and the fan became much quieter. The combination of powerd with proper C-state configuration makes a noticeable difference on FreeBSD laptops.

More details: coretemp(4), powerd(8), FreeBSD Wiki: Tuning Power Consumption
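To compare temperatures before and after these changes, the output of the sysctl command above can be averaged with a few lines of Python. This is a rough sketch, assuming coretemp reports lines of the form dev.cpu.N.temperature: 45.0C; the function names are hypothetical.

```python
import re

# Matches e.g. "dev.cpu.3.temperature: 45.0C" (assumed coretemp output format).
TEMP_LINE = re.compile(r"^dev\.cpu\.(\d+)\.temperature:\s*([0-9.]+)C$")


def parse_cpu_temps(sysctl_output: str) -> dict[int, float]:
    """Map CPU index to temperature in degrees Celsius."""
    temps = {}
    for line in sysctl_output.splitlines():
        match = TEMP_LINE.match(line.strip())
        if match:
            temps[int(match.group(1))] = float(match.group(2))
    return temps


def average_temp(temps: dict[int, float]):
    """Average across cores, or None when no reading was found."""
    return sum(temps.values()) / len(temps) if temps else None


if __name__ == "__main__":
    sample = "dev.cpu.0.temperature: 44.0C\ndev.cpu.1.temperature: 46.0C\n"
    print(average_temp(parse_cpu_temps(sample)))
```

On a real system you would feed it the output of sysctl dev.cpu, for example via subprocess.run, once before enabling powerd and once after.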

If you’ve ever rebooted a machine and NTP refused to sync because the clock drifted too far, you’ve hit the panic threshold. By default, ntpd will exit if the offset exceeds 1000 seconds.

Add this to /etc/ntp.conf:

tinker panic 0

Setting panic 0 disables the panic threshold entirely, allowing ntpd to correct any offset regardless of size.

If you don’t want to permanently disable the panic threshold, you can do a one-time force sync with:

ntpd -gq

The -g flag allows the first adjustment to be any size, and -q makes ntpd set the time and exit.
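The interaction between the default threshold, tinker panic 0 and -g can be summarized as a small decision function. This is a simplified model of the behavior described above, not ntpd's actual code; the function and parameter names are made up for illustration.

```python
PANIC_THRESHOLD_S = 1000.0  # ntpd's default panic threshold, in seconds


def ntpd_accepts_offset(
    offset_s: float,
    panic_threshold_s: float = PANIC_THRESHOLD_S,
    allow_first_big_step: bool = False,  # models the -g flag
    is_first_adjustment: bool = True,
) -> bool:
    """Return True if this toy ntpd would correct the offset instead of exiting."""
    if panic_threshold_s == 0:
        # "tinker panic 0": the check is disabled entirely.
        return True
    if allow_first_big_step and is_first_adjustment:
        # -g: the first adjustment may be any size.
        return True
    return abs(offset_s) <= panic_threshold_s


if __name__ == "__main__":
    print(ntpd_accepts_offset(3600))                             # default: refuses
    print(ntpd_accepts_offset(3600, panic_threshold_s=0))        # tinker panic 0
    print(ntpd_accepts_offset(3600, allow_first_big_step=True))  # ntpd -g
```

The model makes the trade-off visible: -g only forgives the first large step, while panic 0 forgives every one.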

The ArchZFS project has moved its official package repository from archzfs.com to GitHub Releases. Here’s how to migrate — and why this matters for Arch Linux ZFS users.

If you run ZFS on Arch Linux, you almost certainly depend on the ArchZFS project for your kernel modules. The project has been the go-to source for prebuilt ZFS packages on Arch for years, saving users from the pain of building DKMS modules on every kernel update.

The old archzfs.com repository has gone stale, and the project has migrated to serving packages directly from GitHub Releases. The packages are built the same way and provide the same set of packages — the only difference is a new PGP signing key and the repository URL.

If you’re currently using the old archzfs.com server in your /etc/pacman.conf, you need to update it. There are two options depending on your trust model.

The PGP signing system is still being finalized, so if you just want it working right away, you can skip signature verification for now:

[archzfs]

SigLevel = Never

Server = https://github.com/archzfs/archzfs/releases/download/experimental

For proper package verification, import the new signing key first:

# pacman-key --init

# pacman-key --recv-keys 3A9917BF0DED5C13F69AC68FABEC0A1208037BE9

# pacman-key --lsign-key 3A9917BF0DED5C13F69AC68FABEC0A1208037BE9

Then set the repo to require signatures:

[archzfs]

SigLevel = Required

Server = https://github.com/archzfs/archzfs/releases/download/experimental

After updating your config, sync and refresh:

# pacman -Sy
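If you manage several machines, the pacman.conf edit can be scripted. Below is a minimal sketch that rewrites only the Server and SigLevel lines inside an existing [archzfs] section. The function name is hypothetical, it does not add a SigLevel line if none exists, and you should review the result before writing it back to /etc/pacman.conf.

```python
NEW_SERVER = "https://github.com/archzfs/archzfs/releases/download/experimental"


def migrate_archzfs(conf_text: str, new_server: str = NEW_SERVER,
                    sig_level: str = "Required") -> str:
    """Return conf_text with the [archzfs] Server/SigLevel lines replaced."""
    out = []
    in_archzfs = False
    for line in conf_text.splitlines():
        stripped = line.strip()
        if stripped.startswith("[") and stripped.endswith("]"):
            # A new section header: track whether we are inside [archzfs].
            in_archzfs = stripped == "[archzfs]"
        elif in_archzfs and stripped.startswith("Server"):
            line = f"Server = {new_server}"
        elif in_archzfs and stripped.startswith("SigLevel"):
            line = f"SigLevel = {sig_level}"
        out.append(line)
    return "\n".join(out) + "\n"


if __name__ == "__main__":
    old = "[archzfs]\nSigLevel = Optional\nServer = https://archzfs.com/$repo/$arch\n"
    print(migrate_archzfs(old), end="")
```

Other sections and commented-out lines are left untouched, so the function can be run more than once without changing the result.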

The repository provides the same package groups as before, targeting different kernels:

| Package Group | Kernel | Use Case |
|---|---|---|
| archzfs-linux | linux (default) | Best for most users, latest stable OpenZFS |
| archzfs-linux-lts | linux-lts | LTS kernel, better compatibility |
| archzfs-linux-zen | linux-zen | Zen kernel with extra features |
| archzfs-linux-hardened | linux-hardened | Security-focused kernel |
| archzfs-dkms | Any kernel | Auto-rebuilds on kernel update, works with any kernel |

Hosting a pacman repository on GitHub Releases is a clever approach. GitHub handles the CDN, availability, and bandwidth — no more worrying about a single server going down and blocking ZFS users from updating. The build pipeline uses GitHub Actions, so packages are built automatically and transparently. You can even inspect the build scripts in the repository itself.

The trade-off is that the URL is a bit unwieldy compared to the old archzfs.com/$repo/$arch, but that’s a minor cosmetic issue.

The project labels this as experimental and advises starting with non-critical systems. In practice, the packages are the same ones the community has been using — the “experimental” label applies to the new distribution method, not the packages themselves. Still, the PGP signing system is being reworked, so you may want to revisit your SigLevel setting once that’s finalized.

The archzfs.com repository is stale and will not receive updates. If you haven’t migrated yet, do it now, before your next pacman -Syu pulls a kernel that your current ZFS modules don’t support and leaves you unable to import your pools after reboot.

For full details and ongoing updates, check the ArchZFS wiki and the release page.

A kernel-to-userspace patch that replaces a vague zpool create error with one that names the exact device and pool causing the problem. Here’s how it works, from the ioctl layer to the formatted error message.

If you’ve managed ZFS pools with more than a handful of disks, you’ve almost certainly hit this error:

$ sudo zpool create tank mirror /dev/sda /dev/sdb /dev/sdc /dev/sdd

cannot create 'tank': one or more vdevs refer to the same device,

or one of the devices is part of an active md or lvm device

Which device? What pool? The error gives you nothing. In a 12-disk server you’re left checking each device one by one until you find the culprit.

I’d been working on a previous PR (#18184) improving zpool create error messages when Brian Behlendorf suggested a follow-up: pass device-specific error information from the kernel back to userspace, following the existing ZPOOL_CONFIG_LOAD_INFO pattern that zpool import already uses.

So I built it. The result is PR #18213:

| | Error message |
|---|---|
| Before | cannot create 'tank': one or more vdevs refer to the same device |
| After | cannot create 'tank': device '/dev/sdb1' is part of active pool 'rpool' |

The obvious approach would be: when zpool create fails, walk the vdev tree, find the device with the error, and report it. But there’s a timing problem in the kernel that makes this impossible.

When spa_create() fails, the error cleanup path calls vdev_close() on all vdevs. This function unconditionally resets vd->vdev_stat.vs_aux to VDEV_AUX_NONE on every device in the tree. By the time the error code reaches the ioctl handler, all evidence of which device failed and why has been wiped clean.

So the error information has to be captured at the point of failure, inside vdev_label_init(), before the cleanup path destroys it. And it must be stored somewhere that survives the cleanup: the spa_t struct, which represents the pool itself.

The only errno that travels back through the ioctl is an integer like EBUSY. No context about which device, no pool name, nothing. The entire design challenge is getting two strings (a device path and a pool name) from a kernel function that runs during vdev initialization all the way back to the userspace zpool command.

The solution follows the same mechanism that zpool import already uses to return rich error information: an nvlist (ZFS’s key-value dictionary, like a JSON object) packed into the ioctl output buffer under a well-known key.
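The timing problem and the fix can be modeled in a few lines of Python. This is a toy illustration only, not OpenZFS code: the Toy* names are invented, a plain dict stands in for the nvlist, and the cleanup loop stands in for what vdev_close() does to vs_aux.

```python
import errno
from dataclasses import dataclass
from typing import Optional


@dataclass
class ToyVdev:
    path: str
    in_use_by: Optional[str] = None  # pool name found in the on-disk label, if any
    aux: str = "NONE"                # stands in for vdev_stat.vs_aux


@dataclass
class ToySpa:
    vdevs: list
    create_errlist: Optional[dict] = None  # stands in for spa_create_errlist


def toy_label_init(spa: ToySpa, vd: ToyVdev) -> int:
    """Record the first in-use device on the pool object, then fail with EBUSY."""
    if vd.in_use_by is None:
        return 0
    vd.aux = "IN_USE"
    if spa.create_errlist is None:  # keep only the first failing device
        spa.create_errlist = {"vdev": vd.path, "pool": vd.in_use_by}
    return errno.EBUSY


def toy_spa_create(spa: ToySpa):
    """Return (error, errinfo); errinfo survives the per-vdev cleanup."""
    error = 0
    for vd in spa.vdevs:
        error = toy_label_init(spa, vd)
        if error:
            break
    errinfo = None
    if error:
        # Hand ownership to the caller before cleanup runs.
        errinfo, spa.create_errlist = spa.create_errlist, None
        for vd in spa.vdevs:
            vd.aux = "NONE"  # cleanup wipes the per-vdev evidence
    return error, errinfo


if __name__ == "__main__":
    spa = ToySpa([ToyVdev("/dev/sda"), ToyVdev("/dev/sdb1", in_use_by="rpool")])
    print(toy_spa_create(spa))
```

After the call every vdev is back to "NONE", yet the caller still holds the device path and pool name, which is the property the real patch relies on.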

Four touch points, each doing one small thing. Let’s walk through them.

This is the heart of the change. Inside vdev_label_init(), when vdev_inuse() returns true, we build an nvlist with the device path, then read the on-disk label to extract the pool name:

module/zfs/vdev_label.c

/*

* Determine if the vdev is in use.

*/

if (reason != VDEV_LABEL_REMOVE && reason != VDEV_LABEL_SPLIT &&

vdev_inuse(vd, crtxg, reason, &spare_guid, &l2cache_guid)) {

if (spa->spa_create_errlist == NULL) {

nvlist_t *nv = fnvlist_alloc();

nvlist_t *cfg;

if (vd->vdev_path != NULL)

fnvlist_add_string(nv,

ZPOOL_CREATE_INFO_VDEV, vd->vdev_path);

cfg = vdev_label_read_config(vd, -1ULL);

if (cfg != NULL) {

const char *pname;

if (nvlist_lookup_string(cfg,

ZPOOL_CONFIG_POOL_NAME, &pname) == 0)

fnvlist_add_string(nv,

ZPOOL_CREATE_INFO_POOL, pname);

nvlist_free(cfg);

}

spa->spa_create_errlist = nv;

}

return (SET_ERROR(EBUSY));

}

The NULL check on spa_create_errlist ensures we only record the first failing device. If there are multiple conflicts, the first one is what you need to fix anyway. fnvlist_alloc() and fnvlist_add_string() are the “fatal” nvlist functions that panic on allocation failure, which is appropriate here since we’re in a code path where memory should be available.

On error, spa_create() transfers ownership of the errlist via the new errinfo output parameter:

module/zfs/spa.c

if (error != 0) {

if (errinfo != NULL) {

*errinfo = spa->spa_create_errlist;

spa->spa_create_errlist = NULL;

}

spa_unload(spa);

spa_deactivate(spa);

spa_remove(spa);

...

Setting spa_create_errlist to NULL after the handoff prevents spa_deactivate() from freeing it; ownership transfers to the caller.

The ioctl handler wraps the errlist under a ZPOOL_CONFIG_CREATE_INFO key, mirroring how zpool import uses ZPOOL_CONFIG_LOAD_INFO:

module/zfs/zfs_ioctl.c

error = spa_create(zc->zc_name, config, props, zplprops, dcp,

&errinfo);

if (errinfo != NULL) {

nvlist_t *outnv = fnvlist_alloc();

fnvlist_add_nvlist(outnv,

ZPOOL_CONFIG_CREATE_INFO, errinfo);

(void) put_nvlist(zc, outnv);

nvlist_free(outnv);

nvlist_free(errinfo);

}

put_nvlist() packs the nvlist and copies it out to zc->zc_nvlist_dst, the output buffer that userspace supplied with the ioctl.

In libzfs, after the ioctl fails, we unpack the buffer, extract the device and pool name, and format the error:

lib/libzfs/libzfs_pool.c

nvlist_t *outnv = NULL;

if (zc.zc_nvlist_dst_size > 0 &&

nvlist_unpack((void *)(uintptr_t)zc.zc_nvlist_dst,

zc.zc_nvlist_dst_size, &outnv, 0) == 0 &&

outnv != NULL) {

nvlist_t *errinfo = NULL;

if (nvlist_lookup_nvlist(outnv,

ZPOOL_CONFIG_CREATE_INFO, &errinfo) == 0) {

const char *vdev = NULL;

const char *pname = NULL;

(void) nvlist_lookup_string(errinfo,

ZPOOL_CREATE_INFO_VDEV, &vdev);

(void) nvlist_lookup_string(errinfo,

ZPOOL_CREATE_INFO_POOL, &pname);

if (vdev != NULL) {

if (pname != NULL)

zfs_error_aux(hdl,

dgettext(TEXT_DOMAIN,

"device '%s' is part of "

"active pool '%s'"),

vdev, pname);

else

zfs_error_aux(hdl,

dgettext(TEXT_DOMAIN,

"device '%s' is in use"),

vdev);

...

}

}

}

If both values are available, you get: device ‘/dev/sdb1’ is part of active pool ‘rpool’. If only the path is available (label can’t be read), you get: device ‘/dev/sdb1’ is in use. If no errinfo came back at all, the existing generic error handling kicks in unchanged.
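The three outcomes can be written as one small function. This is a Python paraphrase of the fallback logic for illustration, not the libzfs code; a dict with hypothetical "vdev" and "pool" keys stands in for the unpacked nvlist.

```python
from typing import Optional

GENERIC = ("one or more vdevs refer to the same device, or one of the devices "
           "is part of an active md or lvm device")


def format_create_error(pool: str, errinfo: Optional[dict]) -> str:
    """Pick the most specific message the available error info allows."""
    detail = GENERIC
    if errinfo and errinfo.get("vdev"):
        if errinfo.get("pool"):
            detail = (f"device '{errinfo['vdev']}' is part of "
                      f"active pool '{errinfo['pool']}'")
        else:
            detail = f"device '{errinfo['vdev']}' is in use"
    return f"cannot create '{pool}': {detail}"


if __name__ == "__main__":
    print(format_create_error("tank", {"vdev": "/dev/sdb1", "pool": "rpool"}))
    print(format_create_error("tank", {"vdev": "/dev/sdb1"}))
    print(format_create_error("tank", None))
```

Each branch degrades gracefully, so an older kernel module that never sends error info still produces the familiar generic message.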

| File | + | − |
|---|---|---|
| module/zfs/vdev_label.c | +23 | -1 |
| lib/libzfs/libzfs_pool.c | +41 | |
| module/zfs/zfs_ioctl.c | +12 | -1 |
| module/zfs/spa.c | +10 | -1 |
| cmd/ztest.c | +5 | -5 |
| include/sys/fs/zfs.h | +3 | |
| include/sys/spa.h | +1 | -1 |
| include/sys/spa_impl.h | +1 | |
| tests/.../zpool_create_errinfo_001_neg.ksh | +99 | |
| 11 files total | +195 | -10 |

93 lines of feature code across 8 C files, plus a 99-line ZTS test. The cmd/ztest.c changes are mechanical — just adding a NULL parameter to each spa_create() call to match the new signature.

I tested on an Arch Linux VM running kernel 6.18.9-arch1-2 with ZFS built from source. The test environment used loopback devices, which is the standard approach in the ZFS Test Suite — the kernel code path is identical regardless of the underlying block device.

$ truncate -s 128M /tmp/vdev1

$ sudo losetup /dev/loop10 /tmp/vdev1

$ sudo losetup /dev/loop12 /tmp/vdev1 # same backing file

$ sudo zpool create testpool1 mirror /dev/loop10 /dev/loop12

cannot create 'testpool1': device '/dev/loop12' is part of active pool 'testpool1'

$ truncate -s 128M /tmp/vdev1 /tmp/vdev2

$ sudo zpool create testpool1 mirror /tmp/vdev1 /tmp/vdev2

$ sudo zpool status testpool1

pool: testpool1

state: ONLINE

config:

NAME STATE READ WRITE CKSUM

testpool1 ONLINE 0 0 0

mirror-0 ONLINE 0 0 0

/tmp/vdev1 ONLINE 0 0 0

/tmp/vdev2 ONLINE 0 0 0

A new negative test (zpool_create_errinfo_001_neg) creates two loopback devices backed by the same file and attempts a mirror pool creation. It verifies three things: the command fails, the error names the specific device, and the error mentions the active pool.

$ zfs-tests.sh -vx -t cli_root/zpool_create/zpool_create_errinfo_001_neg

Test: zpool_create_errinfo_001_neg (run as root) [00:00] [PASS]

Results Summary

PASS 1

Running Time: 00:00:00

Percent passed: 100.0%

CI checkstyle passes on all platforms (Ubuntu 22/24, Debian 12/13, CentOS Stream 9, AlmaLinux 8/10, FreeBSD 14). Clean build with no compiler warnings.
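The three checks made by the negative test described above can be expressed compactly. The real test is a ksh script in the ZFS Test Suite; this Python version is only a sketch of the same assertions, with a hypothetical function name and the assumption that the error text is captured from stderr.

```python
def check_create_failure(returncode: int, stderr: str, device: str) -> list[str]:
    """Return a list of problems; an empty list means all three checks pass."""
    problems = []
    if returncode == 0:
        problems.append("zpool create unexpectedly succeeded")
    if device not in stderr:
        problems.append(f"error does not name device {device}")
    if "active pool" not in stderr:
        problems.append("error does not mention the active pool")
    return problems


if __name__ == "__main__":
    message = ("cannot create 'testpool1': device '/dev/loop12' "
               "is part of active pool 'testpool1'")
    print(check_create_failure(1, message, "/dev/loop12"))
```

Run against the old generic message, the same function reports the two missing details, which is exactly the regression the test is meant to catch.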

Only the first failing device is recorded. If multiple vdevs conflict, only the first one goes into spa_create_errlist. You need to fix the first problem before you can see the next one anyway, and it keeps the implementation simple.

The label is read twice. vdev_inuse() already reads the on-disk label and frees it before returning. We read it again with vdev_label_read_config() to extract the pool name. Modifying vdev_inuse() to optionally return the label would avoid this, but changing that function signature affects many callers — a much larger change for a follow-up.

The errlist field lives on spa_t permanently. It’s only used during spa_create(), but the field exists on every pool in memory. This costs 8 bytes per pool (one pointer, always NULL during normal operation) — negligible.

Only one error path is covered. The mechanism only fires for the vdev_inuse() EBUSY case inside vdev_label_init(). Other failures (open errors, size mismatches) still produce generic messages. The spa_create_errlist infrastructure is there for future extension.

This is a focused first step. The spa_create_errlist mechanism could be extended to cover more error paths — vdev_open() failures, size mismatches, GUID conflicts. The infrastructure is in place; it just needs more callsites.

The PR is at openzfs/zfs #18213. Feedback welcome.

A journey through packaging Python libraries for spaced repetition and Anki deck generation across multiple platforms.

As someone passionate about both medical education tools and open-source software, I recently embarked on a project to make several useful Python libraries available as native packages for FreeBSD and Arch Linux. This post documents the process and shares what I learned along the way.

Spaced repetition software like Anki has become indispensable for medical students and lifelong learners. However, the ecosystem of tools around Anki—libraries for generating decks programmatically, analyzing study data, and implementing scheduling algorithms—often requires manual installation via pip. This creates friction for users and doesn’t integrate well with system package managers.

My goal was to package three key Python libraries: fsrs, genanki, and ankipandas.

The AUR is a community-driven repository for Arch Linux users. Creating packages here involves writing a PKGBUILD file that describes how to fetch, build, and install the software.

The FSRS (Free Spaced Repetition Scheduler) algorithm represents the cutting edge of spaced repetition research. Version 6.x brought significant API changes, including renaming the main FSRS class to Scheduler.

# PKGBUILD for python-fsrs

pkgname=python-fsrs

pkgver=6.3.0

pkgrel=1

pkgdesc="Free Spaced Repetition Scheduler algorithm"

arch=('any')

url="https://github.com/open-spaced-repetition/py-fsrs"

license=('MIT')

depends=('python' 'python-typing_extensions')

makedepends=('python-build' 'python-installer' 'python-wheel' 'python-setuptools')

source=("https://files.pythonhosted.org/packages/source/f/fsrs/fsrs-${pkgver}.tar.gz")

sha256sums=('3abbafd66469ebf58d35a5d5bb693a492e1db44232e09aa8e4d731bf047cd0ae')

build() {

cd "fsrs-$pkgver"

python -m build --wheel --no-isolation

}

package() {

cd "fsrs-$pkgver"

python -m installer --destdir="$pkgdir" dist/*.whl

install -Dm644 LICENSE "$pkgdir/usr/share/licenses/$pkgname/LICENSE"

}
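The sha256sums entry is simply the SHA-256 digest of the downloaded source tarball. If you want to double-check a value independently of the packaging tools, a short sketch using Python's hashlib looks like this; the function names are hypothetical and the tarball file name in the example is assumed from the source URL pattern in the PKGBUILD.

```python
import hashlib
from typing import Iterable


def sha256_of_chunks(chunks: Iterable[bytes]) -> str:
    """Hex digest of the concatenation of all chunks."""
    digest = hashlib.sha256()
    for chunk in chunks:
        digest.update(chunk)
    return digest.hexdigest()


def sha256_of_file(path: str, chunk_size: int = 1 << 20) -> str:
    """Hash a file in fixed-size chunks so large tarballs need little memory."""
    with open(path, "rb") as fh:
        return sha256_of_chunks(iter(lambda: fh.read(chunk_size), b""))


def matches_pkgbuild(path: str, expected_hex: str) -> bool:
    """Compare a file's digest with the value listed in sha256sums."""
    return sha256_of_file(path) == expected_hex.lower()


if __name__ == "__main__":
    import sys
    # Usage: python checksum.py fsrs-6.3.0.tar.gz <expected sha256>
    if len(sys.argv) == 3:
        print(matches_pkgbuild(sys.argv[1], sys.argv[2]))
```

A mismatch usually means the upstream tarball changed or the download was truncated, and either way the PKGBUILD should not be published until it is resolved.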

The package is now available at: aur.archlinux.org/packages/python-fsrs

genanki allows developers to create Anki decks programmatically—perfect for generating flashcards from databases, APIs, or other structured data sources.

Package available at: aur.archlinux.org/packages/python-genanki

ankipandas provides a pandas-based interface for reading and analyzing Anki collection databases, enabling data science workflows on your study data.

Package available at: aur.archlinux.org/packages/python-ankipandas

FreeBSD’s ports system is more formal than the AUR, with stricter guidelines and a review process. Ports are submitted via Bugzilla and reviewed by committers before inclusion in the official ports tree.

Creating a FreeBSD port required several steps:

These included working around the pytest-runner build dependency, which doesn’t exist in FreeBSD ports, and testing with make and make install in a FreeBSD environment.

The final Makefile:

PORTNAME= genanki

PORTVERSION= 0.13.1

CATEGORIES= devel python

MASTER_SITES= PYPI

PKGNAMEPREFIX= ${PYTHON_PKGNAMEPREFIX}

MAINTAINER= chris.longros@gmail.com

COMMENT= Library for generating Anki decks

WWW= https://github.com/kerrickstaley/genanki

LICENSE= MIT

LICENSE_FILE= ${WRKSRC}/LICENSE.txt

RUN_DEPENDS= ${PYTHON_PKGNAMEPREFIX}cached-property>0:devel/py-cached-property@${PY_FLAVOR} \

${PYTHON_PKGNAMEPREFIX}chevron>0:textproc/py-chevron@${PY_FLAVOR} \

${PYTHON_PKGNAMEPREFIX}frozendict>0:devel/py-frozendict@${PY_FLAVOR} \

${PYTHON_PKGNAMEPREFIX}pystache>0:textproc/py-pystache@${PY_FLAVOR} \

${PYTHON_PKGNAMEPREFIX}pyyaml>0:devel/py-pyyaml@${PY_FLAVOR}

USES= python

USE_PYTHON= autoplist distutils

.include <bsd.port.mk>

One challenge was that genanki’s setup.py required pytest-runner as a build dependency, which doesn’t exist in FreeBSD ports. The solution was to create a patch file that removes this requirement:

--- setup.py.orig 2026-01-11 15:32:48.887894000 +0100

+++ setup.py 2026-01-11 15:32:51.336128000 +0100

@@ -27,9 +27,6 @@

'chevron',

'pyyaml',

],

- setup_requires=[

- 'pytest-runner',

- ],

tests_require=[

'pytest>=6.0.2',

],

The FSRS port followed a similar pattern, with its own set of dependencies to map to FreeBSD ports.

Both ports are available in my GitHub repository and have been submitted to FreeBSD Bugzilla for review.

One of the biggest challenges in packaging is mapping upstream dependencies to existing packages in the target ecosystem. For FreeBSD, this meant:

- Searching /usr/ports for existing Python packages
- Using the @${PY_FLAVOR} suffix for Python version flexibility
- Finding dependencies (such as chevron) that weren’t immediately obvious from the package metadata

Python packaging has evolved significantly, with projects using various combinations of:

- setup.py with setuptools
- pyproject.toml with various backends (setuptools, flit, hatch, poetry)
- setup_requires patterns that don’t translate well to system packaging

Creating patches to work around these issues is a normal part of the porting process.

Running a FreeBSD VM (via VirtualBox) proved essential for testing ports before submission. The build process can reveal missing dependencies, incorrect paths, and other issues that only appear in the actual target environment.

| Package | Version | AUR | FreeBSD |
|---|---|---|---|
| python-fsrs / py-fsrs | 6.3.0 | ✅ Published | 📝 Submitted |
| python-genanki / py-genanki | 0.13.1 | ✅ Published | 📝 Submitted |
| python-ankipandas | 0.3.15 | ✅ Published | 🔜 Planned |

If you use these tools on Arch Linux or FreeBSD, I’d love to hear your feedback. And if you’re interested in contributing to open-source packaging:

- Run pkg query -e %m=ports@FreeBSD.org %o to find unmaintained ports you have installed

Every package maintained is one less barrier to entry for users who want to use great software without fighting with dependency management.

Published: January 2026

Repository: github.com/chrislongros/freebsd-ports

lsblk -o NAME,FSTYPE,LABEL,MOUNTPOINT,SIZE,MODEL

changes and updates relevant to users of binary FreeBSD releases:

Changes to this file should not be MFCed.

cd240957d7ba:

Making a connection to INADDR_ANY (i.e., using INADDR_ANY as an alias for localhost) is now disabled by default. This functionality can be re-enabled by setting the net.inet.ip.connect_inaddr_wild sysctl to 1.

b61850c4e6f6:

The bridge(4) sysctl net.link.bridge.member_ifaddrs now defaults to 0, meaning that interfaces added to a bridge may not have IP addresses assigned. Refer to bridge(4) for more information.

44e5a0150835, 9a37f1024ceb:

A new utility sndctl(8) has been added to concentrate the various interfaces for viewing and manipulating audio device settings (sysctls, /dev/sndstat), into a single utility with a similar control-driven interface to that of mixer(8).

93a94ce731a8:

ps(1)’s options ‘-a’ and ‘-A’, when combined with any other one affecting the selection of processes except for ‘-X’ and ‘-x’, would have no effect, in contradiction with the rule that one process is listed as soon as any of the specified options selects it (inclusive OR), which is both mandated by POSIX and arguably a natural expectation. This bug has been fixed.

As a practical consequence, specifying '-a'/'-A' now causes all processes to be listed regardless of other selection options (except for '-X' and '-x', which still apply). In particular, to list only processes from specific jails, one must not use '-a' with '-J'. Option '-J', contrary to its apparent initial intent, never worked as a filter in practice (except by accident with '-a' due to the bug), but instead as any other selection options (e.g., '-U', '-p', '-G', etc.) subject to the "inclusive OR" rule.

995b690d1398:

ps(1)’s ‘-U’ option has been changed to select processes by their real user IDs instead of their effective one, in accordance with POSIX and the use case of wanting to list processes launched by some user, which is expected to be more frequent than listing processes having the rights of some user. This only affects the selection of processes whose real and effective user IDs differ. After this change, ps(1)’s ‘-U’ flag behaves differently than in other BSDs but identically to that of Linux’s procps and illumos.

1aabbb25c9f9:

ps(1)’s default list of processes now comes from matching its effective user ID instead of its real user ID with the effective user ID of all processes, in accordance with POSIX. As ps(1) itself is not installed setuid, this only affects processes having different real and effective user IDs that launch ps(1) processes.

f0600c41e754-de701f9bdbe0, bc201841d139:

mac_do(4) is now considered production-ready and its functionality has been considerably extended at the price of breaking credentials transition rules’ backwards compatibility. All that could be specified with old rules can also be with new rules. Migrating old rules is just a matter of adding “uid=” in front of the target part, substituting commas (“,”) with semi-colons (“;”) and colons (“:”) with greater-than signs (“>”). Please consult the mac_do(4) manual page for the new rules grammar.

02d4eeabfd73:

hw.snd.maxautovchans has been retired. The commit introduced a hw.snd.vchans_enable sysctl, which along with dev.pcm.X.{play|rec}.vchans, from now on work as tunables to only enable/disable vchans, as opposed to setting their number and/or (de-)allocating vchans. Since these sysctls do not trigger any (de-)allocations anymore, their effect is instantaneous, whereas before we could have frozen the machine (when trying to allocate new vchans) when setting dev.pcm.X.{play|rec}.vchans to a very large value.

7e7f88001d7d:

The definition of pf’s struct pfr_tstats and struct pfr_astats has changed, breaking ABI compatibility for 32-bit powerpc (including powerpcspe) and armv7. Users of these platforms should ensure kernel and userspace are updated together.

5dc99e9bb985, 08e638c089a, 4009a98fe80:

The net.inet.{tcp,udp,raw}.bind_all_fibs tunables have been added. They modify socket behavior such that packets not originating from the same FIB as the socket are ignored. TCP and UDP sockets belonging to different FIBs may also be bound to the same address. The default behavior is unmodified.

f87bb5967670, e51036fbf3f8:

Support for vinum volumes has been removed.

8ae6247aa966, cf0ede720391d, 205659c43d87bd, 1ccbdf561f417, 4db1b113b151:

The layout of NFS file handles for the tarfs, tmpfs, cd9660, and ext2fs file systems has changed. An NFS server that exports any of these file systems will need its clients to unmount and remount the exports.

1111a44301da:

Defer the January 19, 2038 date limit in UFS1 filesystems to February 7, 2106. This affects only UFS1 format filesystems. See the commit message for details.

07cd69e272da:

Add a new -a command line option to mountd(8). If this command line option is specified, when a line in exports(5) has the -alldirs export option, the directory must be a server file system mount point.

0e8a36a2ab12:

Add a new NFS mount option called “mountport” that may be used to specify the port# for the NFS server’s Mount protocol. This permits a NFSv3 mount to be done without running rpcbind(8).

b2f7c53430c3:

Kernel TLS is now enabled by default in kernels including KTLS support. KTLS is included in GENERIC kernels for aarch64, amd64, powerpc64, and powerpc64le.

f57efe95cc25:

New mididump(1) utility which dumps MIDI 1.0 events in real time.

ddfc6f84f242:

Update unicode to 16.0.0 and CLDR to 45.0.0.

b22be3bbb2de:

Basic Cloudinit images no longer generate RSA host keys by default for SSH.

000000000000:

RSA host keys for SSH are deprecated and will no longer be generated by default in FreeBSD 16.

0aabcd75dbc2:

EC2 AMIs no longer generate RSA host keys by default for SSH. RSA host key generation can be re-enabled by setting sshd_rsa_enable="YES" in /etc/rc.conf if it is necessary to support very old SSH clients.

a1da7dc1cdad:

The SO_SPLICE socket option was added. It allows TCP connections to be spliced together, enabling proxy-like functionality without the need to copy data in and out of user memory.

fc12c191c087:

grep(1) no longer follows symbolic links by default for recursive searches. This matches the documented behavior in the manual page.

e962b37bf0ff:

When running bhyve(8) guests with a boot ROM, i.e., bhyveload(8) is not used, bhyve now assumes that the boot ROM will enable PCI BAR decoding. This is incompatible with some boot ROMs, particularly outdated builds of edk2-bhyve. To restore the old behavior, add “pci.enable_bars=’true’” to your bhyve configuration.

Note in particular that the uefi-edk2-bhyve package has been renamed to edk2-bhyve.

43caa2e805c2:

amd64 bhyve(8)’s “lpc.bootrom” and “lpc.bootvars” options are deprecated. Use the top-level “bootrom” and “bootvars” options instead.

822ca3276345:

byacc was updated to 20240109.

21817992b331:

ncurses was updated to 6.5.

1687d77197c0:

Filesystem manual pages have been moved to section four. Please check ports you are maintaining for cross-references.

8aac90f18aef:

New MAC/do policy and mdo(1) utility which enables a user to become another user without the requirement of setuid root.

7398d1ece5cf:

hw.snd.version is removed.

a15f7c96a276, a8089ea5aee5:

NVMe over Fabrics controller. The nvmft(4) kernel module adds a new frontend to the CAM target layer which exports ctl(4) LUNs as NVMe namespaces to remote hosts. The nvmfd(8) daemon is responsible for accepting incoming connection requests and handing off connected queue pairs to nvmft(4).

a1eda74167b5, 1058c12197ab:

NVMe over Fabrics host. New commands added to nvmecontrol(8) to establish connections to remote controllers. Once connections are established they are handed off to the nvmf(4) kernel module which creates nvmeX devices and exports remote namespaces as nda(4) disks.

25723d66369f:

As a side-effect of retiring the unit.* code in sound(4), the hw.snd.maxunit loader(8) tunable is also retired.

eeb04a736cb9:

date(1) now supports nanoseconds. For example: date -Ins prints “2024-04-22T12:20:28,763742224+02:00” and date +%N prints “415050400”.

6d5ce2bb6344:

The default value of the nfs_reserved_port_only rc.conf(5) setting has changed. The FreeBSD NFS server now requires the source port of requests to be in the privileged port range (i.e., <= 1023), which generally requires the client to have elevated privileges on their local system. The previous behavior can be restored by setting nfs_reserved_port_only=NO in rc.conf.

aea973501b19:

ktrace(2) will now record detailed information about capability mode violations. The kdump(1) utility has been updated to display such information.

f32a6403d346:

One True Awk updated to 2nd Edition. See https://awk.dev for details on the additions. Unicode and CSVs (Comma Separated Values) are now supported.

fe86d923f83f:

usbconfig(8) now reads the descriptions of the USB vendor and products from usb.ids when available, similarly to what pciconf(8) does.

4347ef60501f:

The powerd(8) utility is now enabled in /etc/rc.conf by default on images for the arm64 Raspberry Pi’s (arm64-aarch64-RPI img files). This prevents the CPU clock from running slow all the time.

0b49e504a32d:

rc.d/jail now supports the legacy variable jail_${jailname}_zfs_dataset to allow unmaintained jail managers like ezjail to make use of this feature (simply rename jail_${jailname}_zfs_datasets in the ezjail config to jail_${jailname}_zfs_dataset).

e0dfe185cbca:

jail(8) now support zfs.dataset to add a list of ZFS datasets to a

jail.61174ad88e33:

newsyslog(8) now supports specifying a global compression method directly

at the beginning of the newsyslog.conf file, which will make newsyslog(8)

to behave like the corresponding option was passed to the newly added

‘-c’ option. For example:<compress> none906748d208d3:

newsyslog(8) now accepts a new option, ‘-c’, which overrides all historical compression flags by treating their meaning as “treat the file as compressible” rather than “compress the file with that specific method.”

The following choices are available:

* none: Do not compress, regardless of flag.
* legacy: Historical behavior (J=bzip2, X=xz, Y=zstd, Z=gzip).
* bzip2, xz, zstd, gzip: Apply the specified compression method.

We plan to change the default to 'none' in FreeBSD 15.0.

1a878807006c:

Statistics collection has been added to the NFS-over-TLS code in the NFS server so that sysadmins can monitor usage. The statistics are available via the kern.rpc.tls.* sysctls.

7c5146da1286:

mountd(8) has been modified to use strunvis(3) to decode directory names in exports(5) file(s). This allows special characters, such as blanks, to be embedded in the directory name(s). `vis -M` may be used to encode such directory name(s).

c5359e2af5ab:

bhyve(8) has a new network backend, “slirp”, which makes use of the libslirp package to provide a userspace network stack. This backend makes it possible to access the guest network from the host without requiring any extra network configuration on the host.

bb830e346bd5:

Set the IUTF8 flag by default in tty(4).

128f63cedc14 and 9e589b093857 added proper UTF-8 backspacing handling in the tty(4) driver, which is enabled by setting the new IUTF8 flag through stty(1). Since the default locale is UTF-8, enable IUTF8 by default.

ff01d71e48d4:

dialog(1) has been replaced by bsddialog(1).

41582f28ddf7:

FreeBSD 15.0 will not include support for 32-bit platforms. However, 64-bit systems will still be able to run older 32-bit binaries.

Support for executing 32-bit binaries on 64-bit platforms via COMPAT_FREEBSD32 will remain supported for at least the stable/15 and stable/16 branches.

Support for compiling individual 32-bit applications via `cc -m32` will also be supported for at least the stable/15 branch which includes suitable headers in /usr/include and libraries in /usr/lib32.

Support for 32-bit platforms in ports for 15.0 and later releases is also deprecated, and these future releases may not include binary packages for 32-bit platforms or support for building 32-bit applications from ports.

stable/14 and earlier branches will retain existing 32-bit kernel and world support. Ports will retain existing support for building ports and packages for 32-bit systems on stable/14 and earlier branches as long as those branches are supported by the ports system. However, all 32-bit platforms are Tier-2 or Tier-3 and support for individual ports should be expected to degrade as upstreams deprecate 32-bit platforms.

With the current support schedule, stable/14 will be EOLed 5 years after the release of 14.0. The EOL of stable/14 would mark the end of support for 32-bit platforms including source releases, pre-built packages, and support for building applications from ports. Given an estimated release date of October 2023 for 14.0, support for 32-bit platforms would end in October 2028.

The project may choose to alter this approach when 15.0 is released by extending some level of 32-bit support for one or more platforms in 15.0 or later.

Users should use the stable/14 branch to migrate off of 32-bit platforms.
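When planning a move off 32-bit, it can help to know which binaries on a system are still 32-bit. file(1) already reports this; the sketch below does the same check by hand by reading the EI_CLASS byte of the ELF header (1 means 32-bit, 2 means 64-bit). The function name `elf_bits` is made up for illustration and is not part of FreeBSD.

```sh
#!/bin/sh
# Hypothetical helper: print 32 or 64 depending on the ELF class of a file.
elf_bits() {
    # The first four bytes must be the ELF magic: 0x7f 'E' 'L' 'F'.
    magic=$(od -An -tx1 -N4 "$1" | tr -d ' \n')
    if [ "$magic" != "7f454c46" ]; then
        echo "not-elf"
        return 1
    fi
    # Byte at offset 4 is EI_CLASS: 1 = 32-bit, 2 = 64-bit.
    class=$(od -An -tu1 -j4 -N1 "$1" | tr -d ' \n')
    case "$class" in
        1) echo 32 ;;
        2) echo 64 ;;
        *) echo "unknown"; return 1 ;;
    esac
}

# Check the file given on the command line, if any.
if [ $# -gt 0 ]; then
    elf_bits "$1"
fi
```

Running it over the binaries you depend on gives a rough list of what would rely on COMPAT_FREEBSD32 on a 64-bit system.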

“The X86_NATIVE_CPU Kconfig build time option has been merged for the Linux 6.16 merge window as an easy means of enforcing “-march=native” compiler behavior on AMD and Intel processors to optimize your kernel build for the local CPU architecture/family of your system.

The CONFIG_X86_NATIVE_CPU option is honored if compiling the Linux x86_64 kernel with GCC or LLVM Clang when using Clang 19 or newer due to a compiler bug with the Linux kernel on older compiler versions.”
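To check whether a given kernel configuration actually has this option turned on, a small shell helper along these lines may be handy. It is only a sketch: the function name `native_cpu_enabled` is made up for illustration, and it assumes the usual `.config` format where enabled options appear as `CONFIG_<NAME>=y`.

```sh
#!/bin/sh
# Hypothetical helper: succeed if the given kernel config file
# enables CONFIG_X86_NATIVE_CPU.
native_cpu_enabled() {
    grep -q '^CONFIG_X86_NATIVE_CPU=y$' "$1"
}

# Check the file given as the first argument, or ./.config by default.
# Nothing is printed if the file does not exist.
config="${1:-.config}"
if [ -f "$config" ]; then
    if native_cpu_enabled "$config"; then
        echo "X86_NATIVE_CPU is enabled in $config"
    else
        echo "X86_NATIVE_CPU is not enabled in $config"
    fi
fi
```

In a kernel source tree, the option can typically be switched on with `scripts/config --enable X86_NATIVE_CPU` followed by `make olddefconfig`, or through `make menuconfig`.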

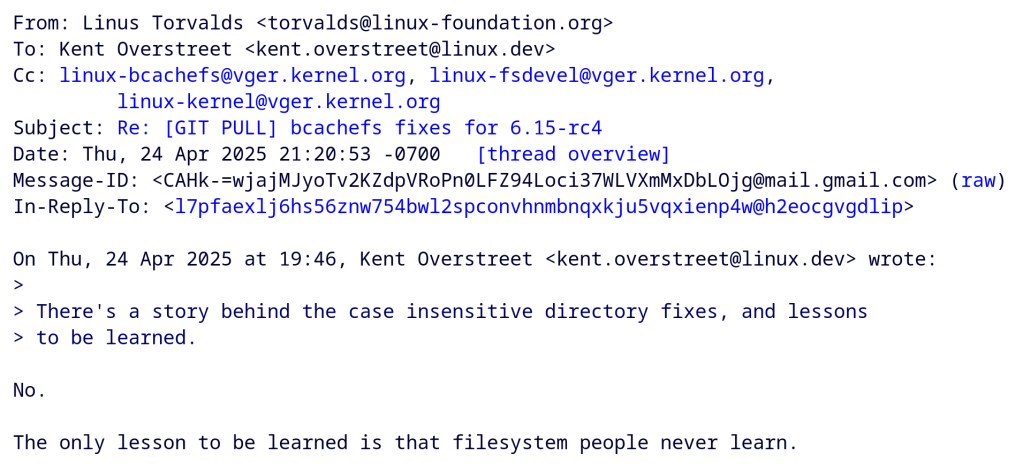

“Dammit. Case sensitivity is a BUG. The fact that filesystem people still think it’s a feature, I cannot understand. It’s like they revere the old FAT filesystem so much that they have to recreate it – badly.

Hint: ❤ and ❤️ are two unicode characters that differ only in ignorable code points. And guess what? The cray-cray incompetent people who want those two to compare the same will then also have other random – and perhaps security-sensitive – files compare the same, just because they have ignorable code points in them.”

https://lore.kernel.org/lkml/CAHk-=wjajMJyoTv2KZdpVRoPn0LFZ94Loci37WLVXmMxDbLOjg@mail.gmail.com/
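The point about ❤ and ❤️ can be seen at the byte level. The sketch below builds both strings from their UTF-8 bytes and shows that they differ only by a trailing U+FE0F (variation selector-16), one of the code points Unicode marks as default-ignorable. The variable names are illustrative only.

```sh
#!/bin/sh
# U+2764 HEAVY BLACK HEART, written as UTF-8 bytes in octal.
plain=$(printf '\342\235\244')
# The same character followed by U+FE0F VARIATION SELECTOR-16.
emoji=$(printf '\342\235\244\357\270\217')
vs16=$(printf '\357\270\217')

# Byte for byte, the two names are different.
if [ "$plain" != "$emoji" ]; then
    echo "different bytes"
fi

# Dropping the trailing ignorable code point makes them identical.
stripped=${emoji%"$vs16"}
if [ "$stripped" = "$plain" ]; then
    echo "same after dropping U+FE0F"
fi
```

A filesystem that compares names while ignoring such code points would treat both as the same file name, which is the collision risk the quote describes.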

An article from XDA:

https://www.xda-developers.com/used-open-source-software-lessons-learned/